To this end, we introduce two new models: i) HiFiC using Squeeze and Excitation (SE) blocks (denoted as HiFiC$_$ in exploiting the spatio-spectral redundancies with channel attention and inter-dependency analysis. To address this problem, in this paper, we adapt the HiFiC spatial compression model to perform spatio-spectral compression of HSIs. However, most of these works aim for spatial compression only and do not consider the spatio-spectral redundancies observed in hyperspectral images (HSIs). Recently, Generative Adversarial Networks (GANs)-based compression models, e.g., High Fidelity Compression (HiFiC), have attracted great attention in the computer vision community. We also show that the combination of quantizers that uses universal quantization for the entropy model and differentiable soft quantization for the decoder is a comparatively good choice for different architectures and datasets.ĭeep learning-based image compression methods have led to high rate-distortion performances compared to traditional codecs.

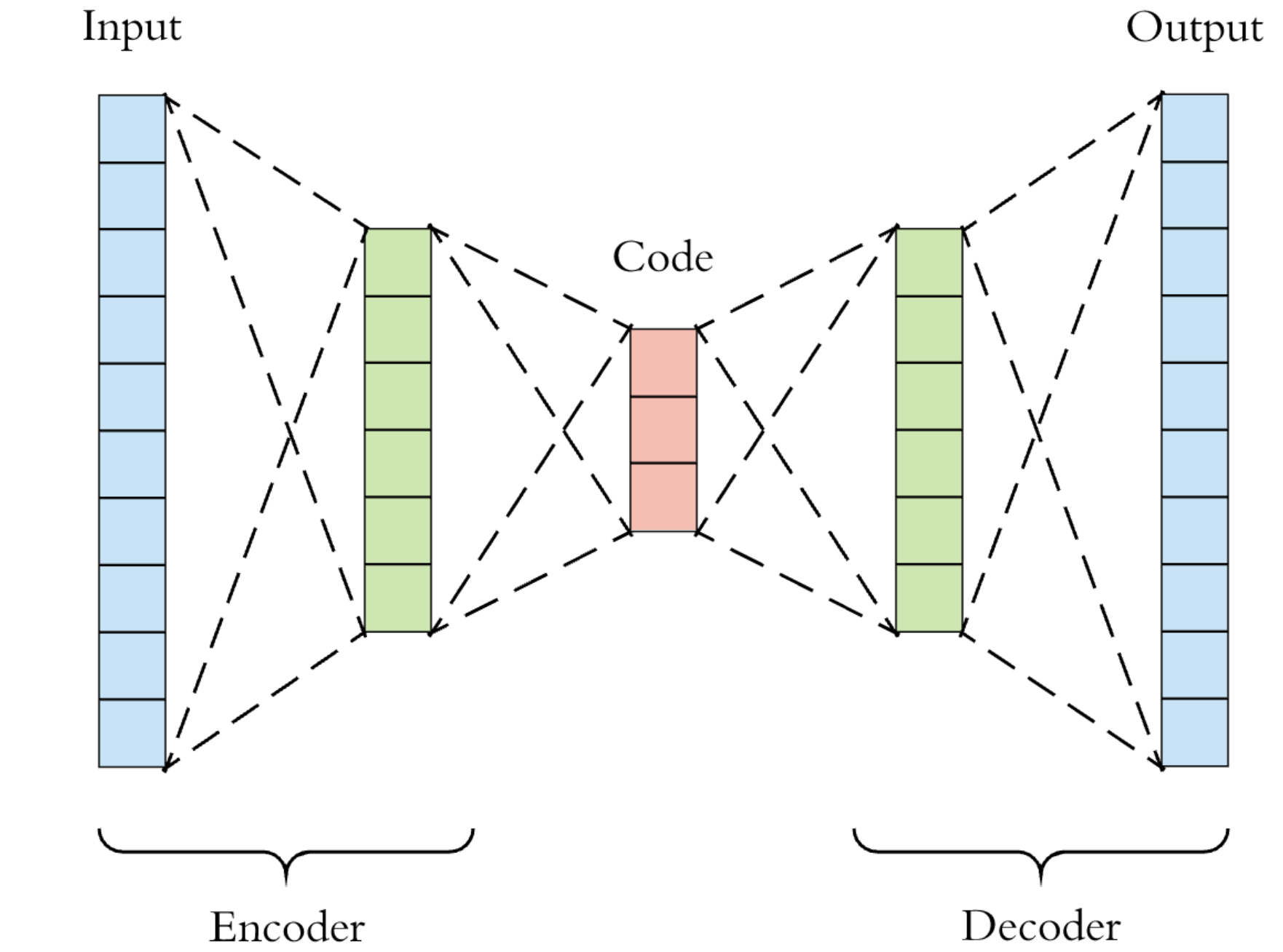

The experimental results reveal that the best approximated quantization differs by the network architectures, and the best approximations of the three are different from the original ones used for the architectures. We conduct experiments using three network architectures on two test datasets. Furthermore, we evaluate possible combinations of quantizers for the decoder and the entropy model, as the approximated quantizers can be different for them. In this study, we comprehensively compare the existing approximations of uniform quantization. However, there have not been equitable comparisons among them. Many methods have been proposed to address the approximations of quantization to obtain gradients. Quantization's gradient is zero, and it cannot backpropagate meaningful gradients. Quantization is a problem encountered in the end-to-end training of deep image compression. In deep image compression, uniform quantization is applied to latent representations obtained by using an auto-encoder architecture for reducing bits and entropy coding.
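The quantization discussion names several surrogates for rounding without showing them. As a minimal sketch, assuming unit step size and scalar latents, the code below contrasts hard rounding with common approximations: a straight-through estimator (STE), additive uniform noise, universal quantization with a shared dither, and softmax-based soft quantization. The function names, the `sigma` temperature, the center grid and the `training_branches` helper are hypothetical illustrations, not the authors' implementation; finite differences stand in for autograd.

```python
import math
import random
from typing import Callable

# Illustrative sketch only: all names and parameters here are hypothetical.


def hard_round(y: float) -> float:
    """Uniform quantization with unit step (round half up); used at test time."""
    return float(math.floor(y + 0.5))


def ste_quantize(y: float) -> tuple[float, float]:
    """Straight-through estimator: hard rounding forward, slope treated as 1 backward."""
    return hard_round(y), 1.0


def noisy_quantize(y: float, rng: random.Random) -> tuple[float, float]:
    """Additive uniform noise proxy: y + u with u ~ U(-0.5, 0.5); slope is 1."""
    return y + rng.uniform(-0.5, 0.5), 1.0


def universal_quantize(y: float, u: float) -> float:
    """Universal quantization: round(y - u) + u with a dither u shared by encoder and decoder.

    The error round(y - u) + u - y lies in (-0.5, 0.5] and is uniform for random u,
    whatever y is, which is why additive noise is a faithful training-time stand-in.
    """
    return hard_round(y - u) + u


def soft_quantize(y: float, centers: list[float], sigma: float) -> float:
    """Soft quantization: softmax-weighted average of the centers (sharper as sigma grows)."""
    logits = [-sigma * (y - c) ** 2 for c in centers]
    m = max(logits)  # subtract the max for numerical stability
    weights = [math.exp(z - m) for z in logits]
    return sum(w * c for w, c in zip(weights, centers)) / sum(weights)


def numeric_grad(f: Callable[[float], float], y: float, eps: float = 1e-4) -> float:
    """Central finite difference, standing in for autograd to inspect each surrogate's slope."""
    return (f(y + eps) - f(y - eps)) / (2 * eps)


def training_branches(y: float, centers: list[float], sigma: float,
                      rng: random.Random) -> tuple[float, float]:
    """Hypothetical helper for the reported combination: the entropy model sees the
    uniform-noise proxy of universal quantization, the decoder sees soft quantization."""
    y_entropy, _ = noisy_quantize(y, rng)
    y_decoder = soft_quantize(y, centers, sigma)
    return y_entropy, y_decoder


if __name__ == "__main__":
    centers = [float(c) for c in range(-4, 5)]
    print(numeric_grad(hard_round, 1.3))  # 0.0: rounding gives no learning signal
    print(numeric_grad(lambda v: soft_quantize(v, centers, 2.0), 1.3))  # positive slope
    print(training_branches(1.3, centers, 2.0, random.Random(0)))
```

The finite-difference check makes the core problem concrete: hard rounding has zero slope away from its jumps, while every surrogate provides a nonzero one. Soft quantization approaches hard rounding as `sigma` grows, at the cost of steeper, less informative gradients.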

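The HiFiC variant built on Squeeze and Excitation (SE) blocks relies on channel attention to capture spectral redundancy. As a rough illustration, the sketch below implements a generic SE block (global average pooling, a two-layer fully connected bottleneck, sigmoid gating, channel rescaling) in plain Python. How the HiFiC variant places these blocks is not described here, so treating each spectral band as one channel, the reduction ratio r = 2, and all weight values are hypothetical assumptions.

```python
import math

# Illustrative sketch: a generic Squeeze-and-Excitation block; all weights are hypothetical.


def sigmoid(t: float) -> float:
    return 1.0 / (1.0 + math.exp(-t))


def se_block(x, w1, b1, w2, b2):
    """Squeeze-and-Excitation on a C x H x W feature map stored as nested lists.

    w1 has shape (C // r) x C and b1 length C // r; w2 has shape C x (C // r)
    and b2 length C, where r is the reduction ratio. Returns (rescaled map, weights).
    """
    # Squeeze: global average pooling yields one descriptor per channel (band).
    z = [sum(sum(row) for row in ch) / (len(ch) * len(ch[0])) for ch in x]
    # Excitation, step 1: bottleneck fully connected layer with ReLU mixes all channels.
    h = [max(0.0, sum(w * zj for w, zj in zip(wi, z)) + bi) for wi, bi in zip(w1, b1)]
    # Excitation, step 2: expand back to C channels; sigmoid gives attention weights in (0, 1).
    s = [sigmoid(sum(w * hj for w, hj in zip(wi, h)) + bi) for wi, bi in zip(w2, b2)]
    # Scale: multiply every spatial position of channel c by s[c].
    y = [[[v * sc for v in row] for row in ch] for ch, sc in zip(x, s)]
    return y, s


if __name__ == "__main__":
    # Hypothetical 4-band, 2 x 2 input with reduction ratio r = 2.
    x = [
        [[1.0, 2.0], [3.0, 4.0]],
        [[0.5, 0.5], [0.5, 0.5]],
        [[2.0, 0.0], [0.0, 2.0]],
        [[1.0, 1.0], [1.0, 1.0]],
    ]
    w1 = [[0.5, -0.5, 0.25, 0.0], [0.0, 0.5, -0.25, 0.5]]
    b1 = [0.0, 0.0]
    w2 = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.5], [0.5, -1.0]]
    b2 = [0.0, 0.0, 0.0, 0.0]
    y, s = se_block(x, w1, b1, w2, b2)
    print([round(v, 3) for v in s])
```

Because each excitation weight is computed from the descriptors of all channels through the bottleneck layer, the scaling applied to one band reflects its dependencies on the other bands, which is the sense in which SE blocks model channel inter-dependency.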